Method Overview

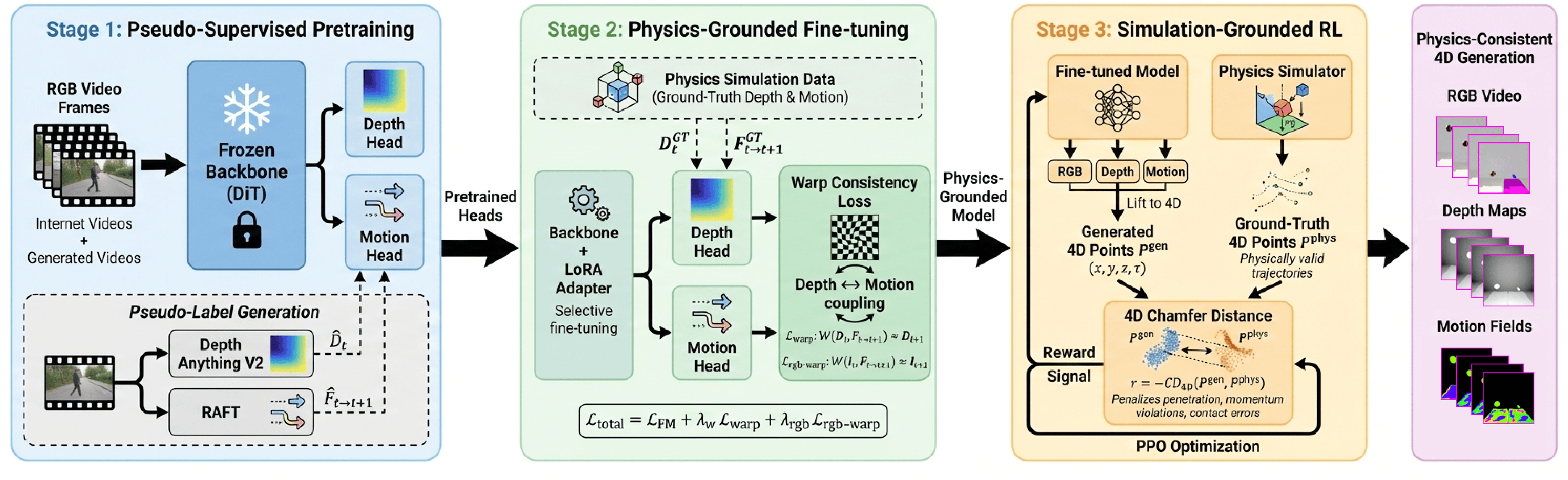

Phys4D converts a pretrained video diffusion model into a physics-consistent 4D world model via a three-stage training paradigm.

Pseudo-Supervised Pretraining

We bootstrap geometry and motion representations using large-scale pseudo-supervision from off-the-shelf depth and optical-flow estimators. The Diffusion Transformer (DiT) backbone stays frozen while auxiliary heads learn to predict depth maps and motion fields.

Physics-Grounded Fine-tuning

We selectively fine-tune the model on physics simulation data with ground-truth annotations. A warp consistency loss couples the depth and motion predictions to enforce temporally coherent 3D structure and physically plausible dynamics.
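The exact form of the warp consistency loss is not given here, so the following is only a minimal sketch of one plausible formulation, not the Phys4D implementation. It assumes the motion field is a 2D pixel displacement `flow` from frame t to frame t+1 plus an optional per-pixel `depth_change`, and it uses nearest-neighbour sampling for brevity (a trainable version would need differentiable bilinear sampling). The function name and all parameter names are hypothetical.

```python
import numpy as np


def warp_consistency_loss(depth_t, depth_next, flow, depth_change=None):
    """Illustrative warp consistency term (assumed form, not the paper's).

    depth_t, depth_next: (H, W) depth maps for frames t and t+1.
    flow: (H, W, 2) pixel displacement (dx, dy) from frame t to frame t+1.
    depth_change: optional (H, W) predicted change in depth along the motion.
    """
    h, w = depth_t.shape
    if depth_change is None:
        depth_change = np.zeros_like(depth_t)

    # Pixel grid of frame t.
    ys, xs = np.mgrid[0:h, 0:w]

    # Where each pixel of frame t lands in frame t+1 (nearest neighbour).
    x_next = np.rint(xs + flow[..., 0]).astype(int)
    y_next = np.rint(ys + flow[..., 1]).astype(int)

    # Ignore pixels that move outside the image.
    valid = (x_next >= 0) & (x_next < w) & (y_next >= 0) & (y_next < h)
    if not valid.any():
        return 0.0

    # Depth observed at the landing position in frame t+1.
    sampled = depth_next[y_next[valid], x_next[valid]]
    # Depth the motion prediction says we should observe there.
    expected = depth_t[valid] + depth_change[valid]

    return float(np.mean(np.abs(sampled - expected)))
```

In this sketch the loss is zero when the depth at t+1, sampled along the predicted motion, agrees with the depth at t plus the predicted depth change. Any disagreement penalizes the depth and motion predictions jointly, which is the sense in which such a term couples the two.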

Simulation-Grounded RL

We apply reinforcement learning with physics-simulator rewards to correct residual physical violations. The reward is based on the 4D Chamfer distance between generated and physically valid object trajectories.
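To make the reward concrete, here is a minimal sketch of a symmetric Chamfer distance over 4D points, under stated assumptions: each trajectory is represented as a set of `(x, y, z, t)` samples, distances are unsquared Euclidean norms, and the reward is simply the negated distance. The text above does not specify how the reward is derived from the distance, and `time_weight` and both function names are hypothetical.

```python
import numpy as np


def chamfer_distance_4d(a, b, time_weight=1.0):
    """Symmetric Chamfer distance between two sets of (x, y, z, t) points.

    a: (N, 4) array-like, b: (M, 4) array-like.
    time_weight rescales the time axis relative to space (an assumption).
    """
    scale = np.array([1.0, 1.0, 1.0, time_weight])
    a = np.asarray(a, dtype=float) * scale
    b = np.asarray(b, dtype=float) * scale

    # Pairwise Euclidean distances, shape (N, M).
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)

    # Mean nearest-neighbour distance in both directions.
    return float(d.min(axis=1).mean() + d.min(axis=0).mean())


def trajectory_reward(generated, reference, time_weight=1.0):
    """Assumed reward shaping: higher is better, 0 for a perfect match."""
    return -chamfer_distance_4d(generated, reference, time_weight)
```

For example, `trajectory_reward(sim_points, sim_points)` evaluates to zero, and the reward decreases as the generated points drift away from the simulator's trajectory in space or time. The pairwise distance matrix costs O(N·M) memory, so a large point set would need a nearest-neighbour structure instead.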